Research

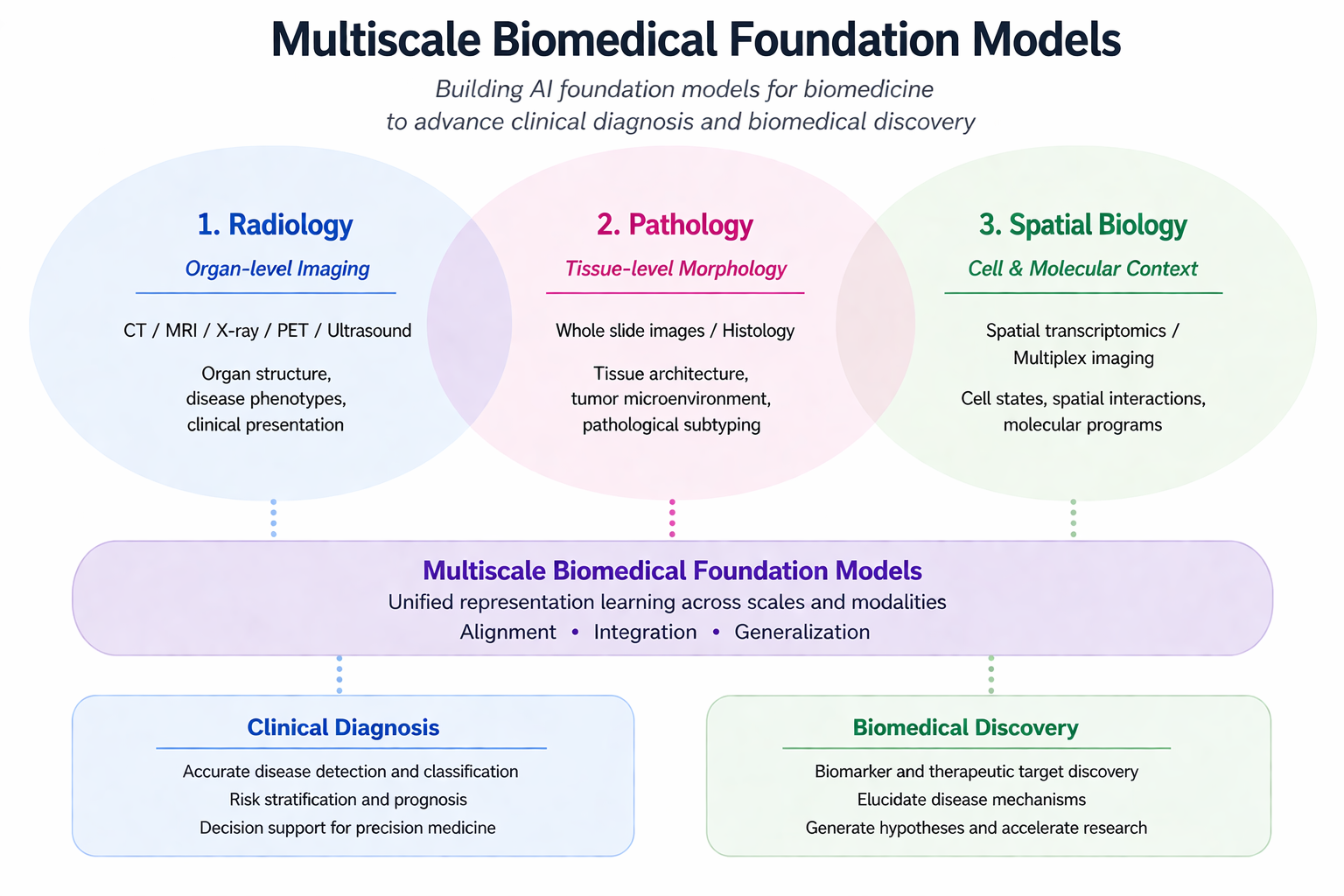

I build AI foundation models for biomedicine that span scales — from organ-level radiology, through tissue-level pathology, down to the cell-and-molecular context of spatial biology — and translate them into tools that close the loop between clinical diagnosis and biomedical discovery.

My current work targets two long-term directions:

- Whole-patient foundation models that integrate imaging, pathology, clinical notes, and longitudinal signals to support diagnosis and treatment-response prediction.

- Spatial-omics foundation models that unify transcriptomics, proteomics, and morphology to enable biomarker discovery and disease-mechanism analysis.

Generative modeling, vision-language models, and agentic reasoning connect both directions, serving as common interfaces across modalities.

Concrete Projects Across Scales

- Radiology — ChexGen (NEJM AI 2026): a generative foundation model for chest radiography.

- Pathology — SlideChat (CVPR 2025): a vision-language assistant for whole-slide pathology.

- Spatial Biology — SP-Mind (ICML 2026): an autonomous reasoning agent for spatial proteomics.

- Generative & VL — MedITok (a unified tokenizer for medical image synthesis & interpretation), GMAI-VL-R1 (reinforcement learning for medical reasoning).

- Drug Discovery — DrugOOD (AAAI 2022 Oral, OOD benchmark for AI-aided drug discovery), SyNDock (multi-protein docking via learnable group synchronization).

Datasets & Benchmarks

Beyond models, I lead community benchmarks that establish standardized evaluation across scales.

- AMOS (NeurIPS 2022 Oral) — large-scale abdominal multi-organ segmentation; the most widely used multi-organ benchmark in the field.

- AMOS-MM (MICCAI 2024 Challenge) — the first multimodal CT analysis benchmark for report generation and visual question answering.

- DrugOOD (AAAI 2022 Oral) — out-of-distribution generalization benchmark for AI-aided drug discovery.

- AutoBench (ICLR 2024) — automatic benchmark using LLMs as aligners for evaluating biomedical vision-language models.

- GMAI-Reasoning10K — a high-quality 10K medical visual question-answering instruction dataset for training and evaluating medical reasoning.